TechCrunch

“Pods,” as they’ve been dubbed, straddle the line between real and fake engagement, making them tricky to detect or take action against. And while they used to be a niche threat (and still are compared with fake account and bot activity), the practice is growing in volume and efficacy.

Pods are easily found via searching online, and some are open to the public. The most common venue for them is Telegram, as it’s more or less secure and places no limit on the number of people who can be in a channel. Posts linked in the pod are liked and commented on by others in the group, which makes those posts far more likely to be spread widely by Instagram’s recommendation algorithms, boosting organic engagement.

Reciprocity as a service

The practice of groups mutually liking one another’s posts is called reciprocity abuse, and social networks are well aware of it, having removed setups of this type before. But the practice has never been studied or characterized in detail, the team from NYU’s Tandon School of Engineering explained.

“In the past they’ve probably been focused more on automated threats, like giving credentials to someone to use, or things done by bots,” said lead author of the study Rachel Greenstadt. “We paid attention to this because it’s a growing problem, and it’s harder to take measures against.”

On a small scale it doesn’t sound too threatening, but the study found nearly 2 million posts that had been manipulated by this method, with more than 100,000 users taking part in pods. And that counts only English-language pods, found using publicly available data. The paper describing the research was published in the Proceedings of the World Wide Web Conference and can be read here.

Importantly, the reciprocal liking does more than inflate apparent engagement. Posts submitted to pods got large numbers of artificial likes and comments, yes, but that activity deceived Instagram’s algorithm into promoting them further, leading to much more engagement even on posts not submitted to the pod.

When contacted for comment, Instagram initially said that this activity “violates our policies and we have numerous measures in place to stop it,” and said that the researchers had not collaborated with the company on the research.

In fact the team was in contact with Instagram’s abuse team from early on in the project, and it seems clear from the study that whatever measures are in place have not, at least in this context, had the desired effect. I pointed this out to the representative and will update this post if I hear back with any more information.

“It’s a grey area”

But don’t reach for the pitchforks just yet: this kind of activity is remarkably hard to detect, because it’s identical in many ways to a group of friends or like-minded users engaging with each other’s content in exactly the way Instagram would like. Even classifying the behavior as abuse isn’t so simple.

“It’s a grey area, and I think people on Instagram think of it as a grey area,” said Greenstadt. “Where does it end? If you write an article and post it on social media and send it to friends, and they like it, and they sometimes do that for you, are you part of a pod? The issue here is not necessarily that people are doing this, but how the algorithm should treat this action, in terms of amplifying or not amplifying that content.”

Obviously if people are doing it systematically with thousands of users and even charging for access (as some groups do), that amounts to abuse. But drawing the line isn’t easy.

More important is that the line can’t be drawn unless you first define the behavior, which the researchers did by carefully inspecting the differences in patterns of likes and comments on pod-boosted and ordinary posts.

“They have different linguistic signatures,” explained co-author Janith Weerasinghe. “What words they use, the timing patterns.”

As you might expect, strangers obligated to comment on posts they don’t actually care about tend to use generic language, saying things like “nice pic” or “wow” rather than more personal remarks. Some groups actually warn against this, Weerasinghe said, but not many.
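The two signatures Weerasinghe mentions, generic wording and timing, can each be turned into a per-post number. Below is a minimal Python sketch under loud assumptions: the phrase list `GENERIC_PHRASES`, the one-hour window, and the helper names `normalize`, `generic_ratio` and `early_fraction` are hypothetical illustrations, not the researchers’ actual features or vocabulary.

```python
import re

# HYPOTHETICAL stock phrases; the study's real vocabulary is not reproduced here.
GENERIC_PHRASES = {"nice pic", "wow", "great shot", "love this", "amazing", "nice"}


def normalize(comment: str) -> str:
    """Lowercase, turn anything but letters/digits/whitespace into spaces, collapse whitespace."""
    text = re.sub(r"[^a-z0-9\s]", " ", comment.lower())
    return " ".join(text.split())


def generic_ratio(comments: list[str]) -> float:
    """Share of a post's comments that exactly match a stock phrase once normalized."""
    if not comments:
        return 0.0
    hits = sum(normalize(c) in GENERIC_PHRASES for c in comments)
    return hits / len(comments)


def early_fraction(minutes_after_post: list[float], window: float = 60.0) -> float:
    """Share of comments arriving within `window` minutes of posting (hypothetical timing feature)."""
    if not minutes_after_post:
        return 0.0
    return sum(t <= window for t in minutes_after_post) / len(minutes_after_post)


comments = ["Nice pic!", "wow", "Congrats on the new job, Sam!"]
print(generic_ratio(comments))           # 2 of the 3 comments are generic
print(early_fraction([5, 12, 30, 200]))  # 3 of the 4 comments came in the first hour
```

Exact phrase matching keeps the sketch short; it would miss variants such as “nice picture” that a token-level model could catch.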

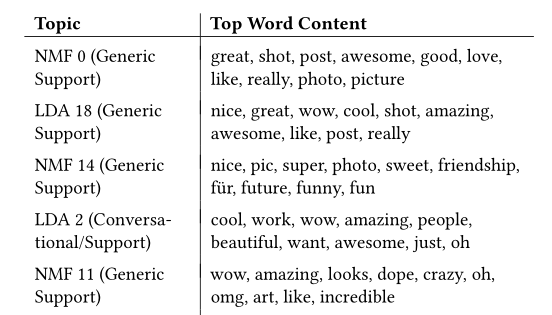

The list of top words used reads, predictably, like the comment section on any popular post, though perhaps that speaks to a more general lack of expressiveness on Instagram than anything else:

But statistical analysis of thousands of such posts, both pod-powered and normal, showed a distinctly higher prevalence of “generic support” comments, often showing up in a predictable pattern.
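The article doesn’t say which statistical test the researchers ran. As one standard way to check whether a higher share of generic comments is more than noise, here is a two-proportion z-test in Python; the counts in the example are invented for illustration, not figures from the study.

```python
import math


def two_proportion_z(hits_a: int, n_a: int, hits_b: int, n_b: int) -> float:
    """z statistic for H0: groups A and B have the same proportion of generic comments."""
    p_a, p_b = hits_a / n_a, hits_b / n_b
    pooled = (hits_a + hits_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("undefined when every comment (or none) is generic")
    return (p_a - p_b) / se


def two_sided_p(z: float) -> float:
    """Two-sided p-value under the standard normal: 2 * (1 - Phi(|z|)) = erfc(|z| / sqrt(2))."""
    return math.erfc(abs(z) / math.sqrt(2))


# INVENTED counts: 600 of 1,000 pod comments vs 300 of 1,000 ordinary comments are generic.
z = two_proportion_z(600, 1000, 300, 1000)
print(round(z, 2), two_sided_p(z))
```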

This data was used to train a machine learning model which, when set loose on posts it had never seen, was able to identify posts given the pod treatment with up to 90% accuracy. This could help surface other pods. And make no mistake: this is only a small sample of what’s out there.
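The article doesn’t describe the model itself. To show how per-post features could feed a classifier, and how accuracy is scored on posts held out from training, here is a logistic-regression sketch trained by batch gradient descent in plain Python. The model choice, the two features (generic-comment share and share of comments in the first hour), and every data point are assumptions for illustration; the toy numbers are far cleaner than real data, so the perfect score here says nothing about the study’s 90% figure.

```python
import math

Features = list[float]


def sigmoid(z: float) -> float:
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def score(w: Features, b: float, x: Features) -> float:
    """Predicted probability that a post was pod-boosted."""
    return sigmoid(sum(wj * xj for wj, xj in zip(w, x)) + b)


def train(X: list[Features], y: list[int], lr: float = 0.5, epochs: int = 5000):
    """Fit weights and bias by minimizing mean log-loss with batch gradient descent."""
    w, b, n = [0.0] * len(X[0]), 0.0, len(X)
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * len(w), 0.0
        for xi, yi in zip(X, y):
            err = score(w, b, xi) - yi  # derivative of log-loss w.r.t. the logit
            grad_w = [g + err * xj for g, xj in zip(grad_w, xi)]
            grad_b += err
        w = [wj - lr * g / n for wj, g in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b


def accuracy(w: Features, b: float, X: list[Features], y: list[int]) -> float:
    """Fraction of posts whose thresholded prediction (p >= 0.5) matches the label."""
    return sum(int(score(w, b, x) >= 0.5) == yi for x, yi in zip(X, y)) / len(y)


# HYPOTHETICAL features per post: [generic-comment share, share of comments in first hour].
# Label 1 = pod-boosted, 0 = ordinary.
X_train = [[0.8, 0.9], [0.7, 0.8], [0.9, 0.7], [0.6, 0.9],
           [0.2, 0.3], [0.3, 0.2], [0.1, 0.4], [0.4, 0.3]]
y_train = [1, 1, 1, 1, 0, 0, 0, 0]
# Held-out posts the model never saw during training.
X_test = [[0.75, 0.8], [0.85, 0.8], [0.3, 0.3], [0.25, 0.25]]
y_test = [1, 1, 0, 0]

w, b = train(X_train, y_train)
print("held-out accuracy:", accuracy(w, b, X_test, y_test))
```

Logistic regression is used here only because it is short and interpretable; the researchers may well have used a different learner.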

“We got a pretty good sample for the time period of the easily accessible, easily findable pods,” said Greenstadt. “The big part of the ecosystem that we’re missing is pods that are smaller but more lucrative, that have to have a certain presence on social media already to join. We’re not influencers, so we couldn’t really measure that.”

The numbers of pods and of the posts they manipulate have grown steadily over the last two years. About 7,000 posts were found during March of 2017. A year later that number had jumped to nearly 55,000. March of 2019 saw over 100,000, and the number continued to increase through the end of the study’s data. It’s safe to say that pods are now posting over 4,000 times a day, and each post is getting a large amount of engagement, both artificial and organic. Pods now have 900 users on average, and some have over 10,000.
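The growth figures are easier to compare as ratios. A quick Python check, using the article’s March counts (themselves approximate: “about,” “nearly,” “over”) and assuming a 31-day month:

```python
# March counts of pod-manipulated posts, as reported in the article (approximate).
MARCH_POSTS = {2017: 7_000, 2018: 55_000, 2019: 100_000}


def year_over_year(counts: dict[int, int]) -> dict[int, float]:
    """Ratio of each year's count to the previous year's."""
    years = sorted(counts)
    return {cur: counts[cur] / counts[prev] for prev, cur in zip(years, years[1:])}


def daily_rate(monthly_posts: int, days_in_month: int = 31) -> float:
    """Average posts per day over one month."""
    return monthly_posts / days_in_month


print(year_over_year(MARCH_POSTS))  # {2018: 7.857..., 2019: 1.818...}
print(round(daily_rate(100_000)))   # 3226
print(4_000 * 31)                   # 124000 posts a month at 4,000 a day
```

So March 2019 works out to roughly 3,200 pod posts a day, and a rate of 4,000 a day corresponds to about 124,000 posts a month, which fits the article’s note that volume kept rising after March 2019. Year-over-year growth slowed from nearly 8x to under 2x, but the count still almost doubled.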

You may be thinking: “If a handful of academics using publicly available APIs and Google could figure this out, why hasn’t Instagram?”

As mentioned before, it’s possible the teams there have simply not considered this to be a major threat and consequently have not created policies or tools to prevent it. Rules proscribing the use of a “third party app or service to generate fake likes, follows, or comments” arguably don’t apply to these pods, since in many ways they’re identical to perfectly legitimate networks of users (though Instagram clarified that it considers pods as violating the rule). And certainly the threat from fake accounts and bots operates at a larger scale.

And while it’s possible that pods could be used as a venue for state-sponsored disinformation or other political purposes, the team didn’t notice anything happening along those lines (though they were not looking for it specifically). So for now the stakes are still relatively small.

That said, Instagram clearly has access to data that would help to define and detect this kind of behavior, and its policies and algorithms could be changed to accommodate it. No doubt the NYU researchers would love to help.